José Luis Oliveira

Universidade de Aveiro, DETI / IEETA

3810-193 Aveiro, Portugal

jlo@ua.pt

(+351) 234 370 500

About

AboutGTO is a toolkit for genomics and proteomics, namely for FASTQ, FASTA and SEQ formats, with many complementary tools. The toolkit is for Unix-based systems, built for ultra-fast computations. GTO supports pipes for easy integration with the sub-programs belonging to GTO as well as external tools. GTO works as LEGOs, since it allows the construction of multiple pipelines with many combinations. GTO includes tools for information display, randomisation, edition, conversion, extraction, search, calculation, compression, simulation and visualisation. GTO is prepared to deal with very large datasets, typically in the scale of Gigabytes or Terabytes (but not limited).

Citation

Citation Download

DownloadTiago Almeida, “Neural Information Retrieval for Biomedical Question-Answering”

July 2019

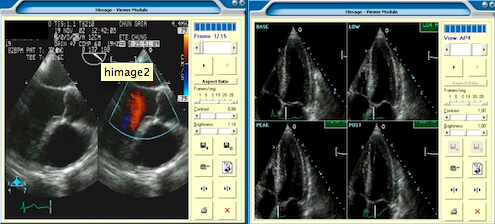

Eduardo Miguel Coutinho Gomes de Pinho, “Multimodal Information Retrieval in Medical Imaging Repositories”

July 2019

Funding entity: FCT Period: 2018-2021

Funding entity: FCT Period: 2018-2021 D4 proposes the use of state-of-the-art Deep Learning methods to tackle the challenges identified in each of the initial stages of the drug discovery pipeline. The main contribution of this project is the creation of an improved computational pipeline that uses Deep Learning architectures to support the drug discovery process. The pipeline will be implemented within a framework that will be available to the community. Both the final platform and the computational methods will be validated with the close collaboration of the industrial partner, which will apply it to develop novel therapeutics for neurodegenerative amyloid diseases.Funding entity: H2020/IMI-JU Period: 2018-2023

Funding entity: H2020/IMI-JU Period: 2018-2023 Presently, Europe is generating unprecedented amounts of patient-level information contained in Electronic Health Record (EHR) systems and other types of health databases. This includes structured data in the form of diagnoses, medication, laboratory test results, etc., and unstructured data in clinical narratives, all of which likely contain invaluable insights into the natural history and burden of disease, its clinical management and outcomes, and wider perspectives on both healthcare and the patient experience of it. It is our ambition to fully leverage these vast volumes of data to improve clinical practice and individual patient outcomes by increasing our understanding of disease and treatment pathways and effects. We will galvanize transparent and reproducible analytics that will generate valid real-world evidence to improve patient care and enable medical outcomes-based research at an unprecedented scale. The Electronic Health Data and Evidence Network (EHDEN) consortium will provide the infrastructure and eco-system to make this ambition come true, supporting the disease-specific projects in the IMI Big Data for Better Outcomes (BD4BO) programme, academia, pharmaceutical, and life sciences, regulatory and allied institutions.Fernanda Brito Correia, “Prediction And Analysis Of Biological Networks Structure And Dynamics”

April 2019

João Rafael Almeida, “Software solution for clinical protocol management”

18 Jul, 18.00h

The work entitled “eQTL analysis of the NAPRT locus” won the Best Poster Award at the 18th Portugaliæ Genetica – Genetic Diversity in Structure and Regulation, that was held on 22-23rd of March 2018 in Porto. The work was carried out by PhD. student Sara Duarte-Pereira, researcher Sérgio Matos, Professors José Luís Oliveira and Raquel M. Silva from the Institute of Electronics and Informatics Engineering of Aveiro (IEETA).

Diogo Pratas was granted Best Oral Presentation Award at the 18th Portugaliæ Genetica – Genetic Diversity in Structure and Regulation, held on 22-23rd of March 2018 in Porto. The work presented is entitled “Metagenomic composition analysis of ancient DNA samples”.

The DICOM validator is a web-based solution for evaluation the compliance of PACS applications with the DICOM standard. It features the “as-a-service” business model, which allows users to immediately reach their goals without the extensive setup efforts required by similar solutions. The DICOM Validator is also a community-driven initiative, where users all around the world are invited to contribute to the creation and maintenance of the DICOM module definitions. With your help, we will soon reach full coverage of the DICOM Standard and keep-up with its latest revisions.

Check us at: https://bioinformatics.ua.pt/dicomvalidator/

Funding entity: Centro 2020

Period: 2017-2020

The sensing of the person in physical context enables personalized and predictive responses, and is a major step towards a smarter and safer environment. The main objective of SOCA is to create an open innovation ecosystem where data is gathered from multiple sources, processed, integrated, and made available for applications and users, and that is able to create a service sphere able to assist every individual inside it – from personal health to routine daily chores. For this endeavor, the academic campus will provide the perfect framework to support and trial innovations on the smart city and on the assisted living arenas.

SCALEUS is a data migration tool that can be used on top of traditional systems to enable semantic web features. This user-friendly tool help users easily create new semantic web applications from scratch. Targeted at the biomedical domain, this web-based platform offers, in a single package, a high-perfomance database, data integration algorithms and optimized text searches over the indexed resources. SCALEUS is available as open source at http://bioinformatics-ua.github.io/scaleus/.

SCALEUS is a data migration tool that can be used on top of traditional systems to enable semantic web features. This user-friendly tool help users easily create new semantic web applications from scratch. Targeted at the biomedical domain, this web-based platform offers, in a single package, a high-perfomance database, data integration algorithms and optimized text searches over the indexed resources. SCALEUS is available as open source at http://bioinformatics-ua.github.io/scaleus/.

Ann2RDF is an interoperable semantic layer that unifies text-mining results originated from different tools, information extracted by curators, and baseline data already available in reference knowledge bases, enabling a proper exploration using semantic web technologies. This result in a more suitable transition process, in which desired annotations are enriched with the possibility to be shared, compared and reused across semantic Knowledge Bases. Ann2RDF is available at http://bioinformatics-ua.github.io/ann2rdf/.

Ann2RDF is an interoperable semantic layer that unifies text-mining results originated from different tools, information extracted by curators, and baseline data already available in reference knowledge bases, enabling a proper exploration using semantic web technologies. This result in a more suitable transition process, in which desired annotations are enriched with the possibility to be shared, compared and reused across semantic Knowledge Bases. Ann2RDF is available at http://bioinformatics-ua.github.io/ann2rdf/.

I2X is a reactive and event-driven framework that simplifies and automates real-time data integration and interoperability. This platform streamlines the creation of customizable integration tasks connecting heterogeneous data sources with any kind of services. Integration is poll-based, with intelligent agents monitoring data sources, or push-based, where the platform waits for data submission by external resources. I2X delivers data to services through a comprehensive template engine, where the platform maps data from the original data source to the destination resources. I2X is an open-source framework available online at https://bioinformatics.ua.pt/i2x/.

Task management systems are crucial tools in modern organizations, by simplifying the coordination of teams and their work. Those tools were developed mainly for task scheduling, assignment, follow-up, and accountability. On the other hand, scientific workflow systems also appeared to help putting together a set of computational processes through the pipeline of inputs and outputs from each, creating in the end a more complex processing workflow. However, there is sometimes a lack of solutions that combine both manually operated tasks with automatic processes, in the same workflow system.

TASKA is a web-based platform that incorporates some of the best functionalities of both systems, addressing the collaborative needs of a task manager with well-structured computational pipelines.

The system is currently being used by EMIF (European Medical Information Framework) for the coordination of clinical studies.

A demo installation of TASKA is available online at https://bioinformatics.ua.pt/taska

MONTRA is a rapid-application development framework designed to facilitate the integration and discovery of heterogeneous objects which may be characterized by distinct data structures. Initially designed as a framework which allows biomedical researchers to easily set up dynamic workspaces, where they can publish and share sensitive information about their data entities, MONTRA is suitable for any data domain, by allowing the characterisation of the most diverse entities or group of entities (datasets). Through the use of a common skeleton, it automatically generates a fully-fledged web data catalogue, ensuring data privacy protection.

MONTRA is being used by several European projects, and its source code is publicly available at https://github.com/bioinformatics-ua/montra.

Funding entity:P2020/PAC

Period: 2016-2019

Heart failure (HF) is a highly prevalent syndrome of impaired cardiac function that constitutes the main cause of hospitalization and disability amongst the elderly, a leading cause of mortality, morbidity and resource consumption. HF with preserved ejection fraction (HFpEF) is characterized by preserved ejection, impaired cardiac filling, lung congestion and effort intolerance, accounting for a rising proportion of over 50% of cases due to ageing and increasing incidences of systemic arterial hypertension (SAH), obesity and diabetes mellitus (DM). The current proposal sets forth to address this issue by a mixed strategy of discovery science approach through comprehensive multi-omics studies in plasma and tissues from HFpEF patients and animal models with and without comorbidities (DM, SAH and obesity), and an hypothesis-driven approach focusing on disturbances of cell function and communication in endothelial cells (EC), cardiac fibroblasts (CF), adipocytes and CM. A holistic view of HFpEF and of the role of comorbidities will be achieved by correlating and integrating transcriptomics, proteomics and lipidomics studies with clinical data. The impact on CM and myocardium will be comprehensively assessed in vitro and in vivo. Finally, preclinical testing of functional foods, synthetic antioxidants, enhanced bioavailability putative therapeutic molecules as well as other potentially effective gene targets identified along the project’s course will be assayed.

Funding entity:FCT

Period: 2016-2019

Digital medical imaging systems are, nowadays, essential tools in clinical practice, both in decision supporting and in treatment management. The main objective of this project is to investigate new solutions for extracting, merging and searching over multimodal data, including text (DICOM metadata and diagnosis reports) and image information. Relevance feedback will be also investigated to increase the results quality of the proposed multimodal architecture. It is also our aim to investigate the contribution of semantic information in imaging retrieval and information extraction. We will develop a semantic PACS concept to provide search functionality using context-dependent semantic information.

Funding entity:FCT (CMU-Portugal)

Period: 2016-2019

Diabetic Retinopathy (DR) is a leading cause of blindness in the industrialized world that can be avoided with early treatment, demanding an earlier diagnosis in a stage where the treatment is still possible and effective. DR evolves silently without any visual symptoms, during the early stages of the disease.

Under this context, the vision of the consortium SCREEN-DR is to create a distributed and automatic screening platform for DR, based on the state-of-the-art Information and Communication Technologies (ICT), including advanced Picture Archiving and Communication Systems (PACS) management, Machine Learning and Image Analysis, enabling immediate response from health carers, allowing accurate follow-up strategies, and fostering technological innovation.

About

AboutA survey on data compression methods for:

- protein sequences

- genomic sequences:

- reference-free

- reference-based

- specific formats:

- FASTA

- FASTQ

- SAM/BAM

Citation

CitationM. Hosseini, D. Pratas, A. J. Pinho. “A survey on data compression methods for biological sequences.” Information 7.4 (2016): 56.

Download

DownloadRicardo Filipe Gonçalves Ribeiro, “TASKA: A modular and easily extendable system for repeatable workflows”

23 Mai, 14.30 pm

About

AboutGeCo is a method and tool designed for the compression and analysis of genomic data. As a compression tool, GeCo is able to provide additional compression gains over several top specific tools in different levels of redundancy. As an analysis tool, GeCo is able to determine absolute measures, namely for many distance computations, and local measures, such as the information content contained in each element, providing a way to quantify and locate specific genomic events. GeCo can afford individual compression and referential compression (conditional or conditional exclusive). The tool is memory adjustable, using hash-caches for the deepest context models, making possible to be run in modest computers.

Citation

CitationD. Pratas, A. J. Pinho, P. J. S. G. Ferreira. Efficient compression of genomic sequences. Proc. of the Data Compression Conference, DCC-2016, Snowbird, UT, March 2016. (accepted)

Download

DownloadLuis Bastião Silva, “A federated architecture for biomedical data integration”

Universidade de Aveiro, DETI/IEETA

About

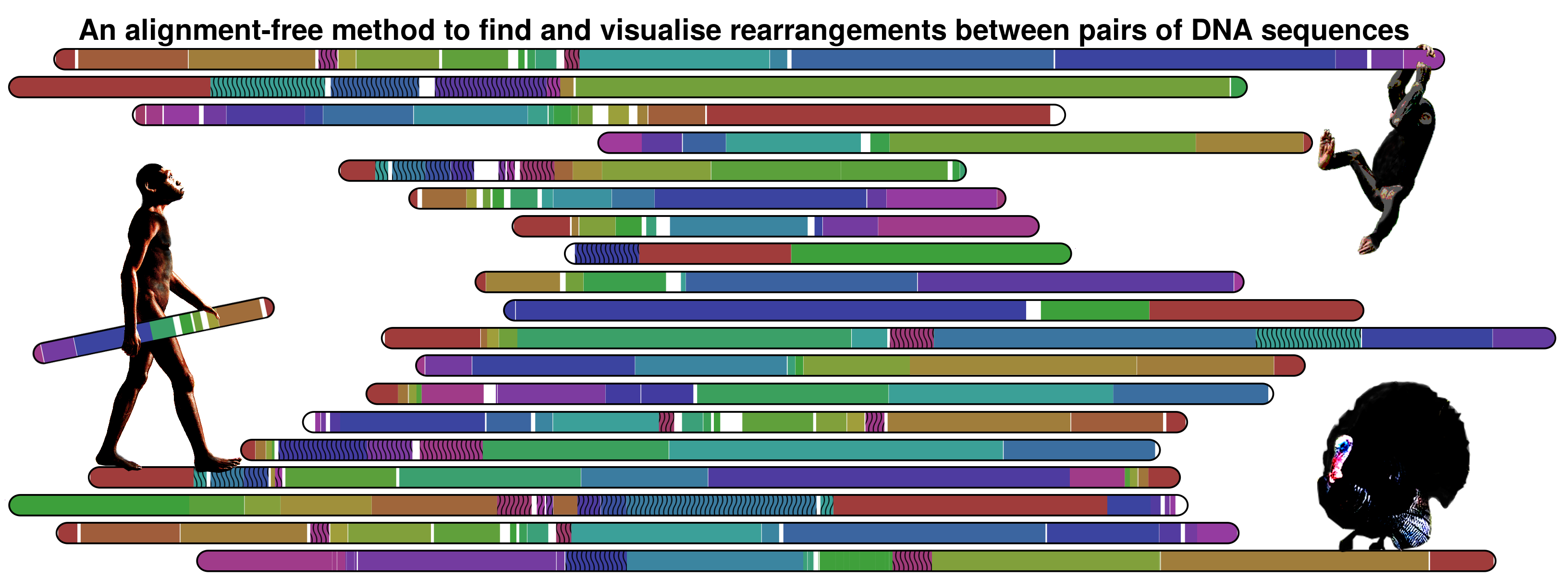

About

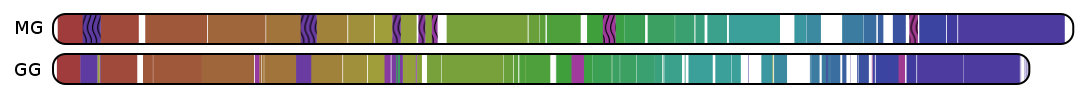

Nevertheless, the method is not limited to primates information. The following image show the information map between Meleagris gallopavo and Gallus gallus chromosomes 1 using a threshold of 0.95.

Download

Download Citation

CitationCarlos Ferreira, “Handling Data Access Latency in Distributed Medical Imaging Environments”

Universidade de Aveiro, DETI/IEETA

Date: 2015.04.10, 10.00 AM

Anfiteatro, Reitoria, Universiade de Aveiro

EAGLE: alignment-free method to compute relative absent words (RAWs)

EAGLE: alignment-free method to compute relative absent words (RAWs)

About

AboutEAGLE is an alignment-free method and associated program to compute relative absent words (RAW) in genomic sequences using a reference sequence. Currently, EAGLE runs on a command line linux environment, building an image with patterns reporting the absent words regions (in SVG) as well as reporting the associated positions into a file. EAGLE has got scripts to run on the current outbreak and the other existing ebola virus genomes (using the human as a reference), including the download, filtering and processing of the entire data.

Citation

CitationRaquel M. Silva, Diogo Pratas, Luísa Castro, Armando J. Pinho & Paulo J. S. G. Ferreira. Bioinformatics (2015): btv189.

DOI: 10.1093/bioinformatics/btv189.

Download

DownloadPaulo Gaspar, “Computational methods for gene characterization and genome knowledge extraction”

Universidade de Aveiro, DETI/IEETA

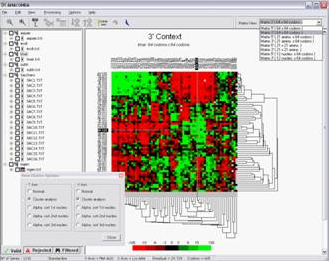

MENT: Microarray comprEssiOn Tools

MENT: Microarray comprEssiOn Tools

About

AboutMENT is a set of tools for lossless compression of microarray images, however, it can be used in other kind of images such as medical, RNAi, etc. This set of tools is divided into two categories, defined by the decomposition approach used:

- Bitplane Decomposition

- Binary-Tree Decomposition

In what follows, we will describe the set of tools available in MENT:

- BOSC06 (Bitplane decOmpoSition Compressor 2006) – Lossless compression tool for microarray images introduced by Neves and Pinho in 2006 (Neves 2006). This tool uses an image-INDEPENDENT context configuration and arithmetic coding.

- BOSC09 (Bitplane decOmpoSition Compressor 2009) – Lossless compression tool for microarray images introduced by Neves and Pinho in 2009 (Neves 2009). This tool uses an image-DEPENDENT context configuration and arithmetic coding.

- BOSC09HC (Bitplane decOmpoSition Compressor 2009 using Histogram Compaction) – Tool inspired on method introduced by (Neves 2009) where it was added an Histogram Compaction unit in order to remove some redundant bitplanes. This Histogram Compaction is usefull for images that have a reduced number of intensities.

- BOSC09SBR (Bitplane decOmpoSition Compressor 2009 using Scalable Bitplane Reduction) – Tool inspired on method introduced by (Neves 2009) where it was added an Scalable Bitplane Reduction unit in order to remove some redundant bitplanes. The Scalable Bitplane Reduction technique was first introduced by Yoo 1999.

- SBC (Simple Bitplane Coding) – Tool inspired on one of Kikuchi’s work (Kikuchi 2009, Kikuchi 2012).

- BOSC09MixSBC (Bitplane decOmpoSition Compressor 2009 Mixture with Simple Bitplane Coding) – Tool based on a mixture of finite-context models. In this particular case, we only considered two different models. The first one used by Neves and Pinho (Neves 2009) and the other one based on a Simple Bitplane Coding inspired on Kikuchi’s work (Kikuchi 2009, Kikuchi 2012).

- BITTOC (Binary Tree decomposiTiOn Compressor) – Tool inspired on Chen’s work regarding compression of color-quantized images (Chen 2002). This tool performance was studied in the context of medical images by Pinho and Neves in 2009 (Pinho 2009) and more recently applied to microarray images (Matos 2014).

- CmpImgs (Compare Images) – An image comparasion tool.

Microarray image sets

Microarray image sets- ApoA1 (32 images | 66.4MB | download)

- Arizona (6 images | 694.9MB | download)

- IBB (44 images | 1.03GB | download)

- ISREC (14 images | 26.7MB | download)

- Omnibus – Low Mode (25 images | 2.5GB | download)

- Omnibus – High Mode (25 images | 2.5GB | download)

- Stanford (40 images | 396MB | download)

- Yeast (109 images | 219MB | download)

- YuLou (3 images | 40.7MB | download)

Citation

CitationIf you use some tool from MENT, please cite the following publications:

- Luís M. O. Matos, António J. R. Neves, Armando J. Pinho, “Lossy-to-lossless compression of biomedical images based on image decomposition”,in Applications of Digital Signal Processing through Practical Approach, Sudhakar Radhakrishnan (Editor), InTech, pp. 125-158, October 2015. DOI: doi.org/10.5772/60650

- Luís M. O. Matos, António J. R. Neves, Armando J. Pinho, “A rate-distortion study on microarray image compression”, in Proceedings of the 20th Portuguese Conference on Pattern Recognition, RecPad 2014, Covilhã, Portugal, October 2014. DOI: doi.org/10.13140/2.1.3431.2969

- Luís M. O. Matos, António J. R. Neves, Armando J. Pinho, “Compression of microarrays images using a binary tree decomposition”, in Proceedings of the 22nd European Signal Processing Conference, EUSIPCO 2014, Lisbon, Portugal, September 2014. DOI: doi.org/10.13140/2.1.1980.5761

- Luís M. O. Matos, António J. R. Neves, Armando J. Pinho, “Compression of DNA microarrays using a mixture of finite-context models”, in Proceedings of the 18th Portuguese Conference on Pattern Recognition, RecPad 2012, Coimbra, Portugal, October 2012. DOI: doi.org/10.13140/2.1.1061.8245

- Luís M. O. Matos, António J. R. Neves, Armando J. Pinho, “Lossy-to-lossless compression of microarrays images using expectation pixel values”, in Proceedings of the 17th Portuguese Conference on Pattern Recognition, RecPad 2011, Porto, Portugal, October 2011. DOI: doi.org/10.13140/2.1.3553.4403

- Luís M. O. Matos, António J. R. Neves, Armando J. Pinho, “Lossless compression of microarrays images based on background/foreground separation”, in Proceedings of the 16th Portuguese Conference on Pattern Recognition, RecPad 2010, Vila Real, Portugal, October 2010. DOI: doi.org/10.13140/2.1.3815.5843

- António J. R. Neves, Armando J. Pinho, “Lossless compression of microarray images using image-dependent finite-context models”, in IEEE Transactions on Medical Imaging, volume 28, number 2, pages 194-201, February 2009. DOI: dx.doi.org/10.1109/TMI.2008.929095

- António J. R. Neves, Armando J. Pinho, “Lossless Compression of Microarray Images”, in Proceedings of the IEEE International Conference on Image Processing, ICIP-2006, Atlanta, GA, pages 2505-2508, 8-11 October, 2006. DOI: dx.doi.org/10.1109/ICIP.2006.31280

Download

Download XS: a FASTQ read simulator

XS: a FASTQ read simulator

About

AboutXS is a skilled FASTQ read simulation tool, flexible, portable (does not need a reference sequence) and tunable in terms of sequence complexity. XS handles Ion Torrent, Roche-454, Illumina and ABI-SOLiD simulation sequencing types. It has several running modes, depending on the time and memory available, and is aimed at testing computing infrastructures, namely cloud computing of large-scale projects, and testing FASTQ compression algorithms. Moreover, XS offers the possibility of simulating the three main FASTQ components individually (headers, DNA sequences and quality-scores). Quality-scores can be simulated using uniform and Gaussian distributions.

Citation

CitationPratas, D., Pinho, A. J., & Rodrigues, J. M. R. (2014). XS: a FASTQ read simulator. BMC research notes, 7(1), 40.

Web download

Web download Download and install from console

Download and install from consoleunzip master.zip

cd xs-master

make

Or alternatively:

wget https://bioinformatics.ua.pt/wp-content/uploads/2014/02/XS.tar.gz

tar -vzxf XS.tar.gz

cd XS

make

SACO: a lossless compression tool for the sequences alignments found in the MAF files.

SACO: a lossless compression tool for the sequences alignments found in the MAF files.

About

AboutSACO was designed to handle the DNA bases and gap symbols that can be found in MAF files. Our method is based on a mixture of finite-context models. Contrarily a recent approach, it addresses both the DNA bases and gap symbols at once, better exploring the existing correlations. For comparison with previous methods, our algorithm was tested in the multiz28way dataset. On average, it attained 0.94 bits per symbol, approximately 7% better than the previous best, for a similar computational complexity. We also tested the model in the most recent dataset, multiz46way. In this dataset, that contains alignments of 46 different species, our compression model achieved an average of 0.72 bits per MSA block symbol.

Data sets

Data sets Citation

CitationIf you use this software, please cite the following publications:

- Luís M. O. Matos, Diogo Pratas, and Armando J. Pinho, “A Compression Model for DNA Multiple Sequence Alignment Blocks”, in IEEE Transactions on Information Theory, volume 59, number 5, pages 3189-3198, May 2013. DOI: dx.doi.org/10.1109/TIT.2012.2236605

- Luís M. O. Matos, Diogo Pratas, and Armando J. Pinho, “Compression of whole genome alignments using a mixture of finite-context models”, in Proceedings of the International Conference on Image Analysis and Recognition, ICIAR 2012, (Editors: A. Campilho and M. Kamel, volume 2324 of Lecture Notes in Computer Science (LNCS)), pages 359-366, Springer Berlin Heidelberg, Aveiro, Portugal, June 2012. DOI: doi.org/10.1007/978-3-642-31295-3_42

Download

Download MAFCO: a compression tool for MAF files

MAFCO: a compression tool for MAF files

About

AboutMAFCO is a lossless compression tool specifically designed to compress MAF (Multiple Alignment Format) files. Compared to gzip, the proposed tool attains a compression gain from ≈ 34% to ≈ 57%, depending on the data set. When compared to a recent dedicated method, which is not compatible with some data sets, the compression gain of MAFCO is about 9%. MAFCO was designed and implemented at IEETA, a research unit of the University of Aveiro, and is available for non-commercial use.

Citation

CitationLuís M. O. Matos, António J. R. Neves, Diogo Pratas and Armando J. Pinho. “MAFCO: a compression tool for MAF files”. PLoS ONE 10(3): e0116082.

DOI: http://dx.doi.org/10.1371/journal.pone.0116082.

Download

DownloadSystems that aggregate health and entertainment goals are proliferating, but little is known about the way to design and evaluate these systems and how to manage the different (if nor opposite) needs of these two main areas. This workshop will promote the discussion of issues surrounding these areas, enabling a better understanding of the how’s and why’s of designing systems for health and entertainment, as well as the identification of new avenues of research in the field.

Therefore we invite designers, researchers and practitioners to participate in an exciting full-day workshop where they are invited to share their personal views and research on the intersection of technology, health and entertainment.

More information at http://designingsystemsforhealthandentertainment.wordpress.com/.

The 6th International Symposium on Semantic Mining in Biomedicine (SMBM)

6th-7th October, 2014 will be held at the University of Aveiro, Portugal.

SMBM aims to bring together researchers from text and data mining in biomedicine, medical, bio- and chemoinformatics, and researchers from biomedical ontology design and engineering. SMBM 2014 is the follow-up event to SMBM 2012 (University of Zürich, Switzerland) SMBM 2010 (EBI, U.K.), SMBM 2008 (University of Turku, Finland), SMBM 2006 (University of Jena, Germany), and SMBM 2005 (EBI, U.K.).

More information at http://www.smbm.org.

Luis Ribeiro, “Platform for on-demand exchange of medical imaging communities”

Universidade de Aveiro, DETI/IEETA

David Campos, “Term expansion methodologies in biomedical information retrieval”

Universidade de Aveiro, DETI/IEETA

The FCT Investigator Programme aims to create a talent base of scientific leaders, by providing 5-year funding for the most talented and promising researchers, across all scientific areas and nationalities.

For the 2013 call, Sérgio Matos, research assistant at IEETA, was awarded a FCT Investigator grant, for the 2014-2018 period.

About

AboutFALCON is an alignment-free unsupervised system to measure a similarity top of multiple reads according to a database. The machine learning system can be used, for example, to classify metagenomic samples. The core of the method is based on the relative algorithmic entropy, a notion that uses model-freezing and exclusive information from a reference, allowing to use much lower computational resources. Moreover, it uses variable multi-threading, without multiplying the memory for each thread, being able to run efficiently from a powerful server to a common laptop. To measure the similarity, the system will build multiple finite-context (Markovian) models that at the end of the reference sequence will be kept frozen. The target reads will then be measured using a mixture of the frozen models. The mixture estimates the probabilities assuming dependency from model performance, and thus, it will allow to adapt the usage of the models according to the nature of the target sequence. Furthermore, it uses fault tolerant (substitution edits) Markovian models that bridge the gap between context sizes. Several running modes are available for different hardware and speed specifications. The system is able to automatically learn to measure similarity, whose properties are characteristics of the Artificial Intelligence field.

Citation

CitationPaper was submitted, currently the citation should be addressed to the url (bioinformatics.ua.pt/software/falcon).

Download

Download DNAatGlance is a program for the detection of large-scale genomic regularities by visual inspection. Several discovery strategies are possible, including the standalone analysis of single sequences, the comparative analysis of sequences from individuals from the same species, and the comparative analysis of sequences from different organisms. The software was designed and implemented at IEETA, a research unit of the University of Aveiro, and is available for non-commercial use.

DNAatGlance is a program for the detection of large-scale genomic regularities by visual inspection. Several discovery strategies are possible, including the standalone analysis of single sequences, the comparative analysis of sequences from individuals from the same species, and the comparative analysis of sequences from different organisms. The software was designed and implemented at IEETA, a research unit of the University of Aveiro, and is available for non-commercial use.

Citation

CitationArmando J. Pinho, Sara P. Garcia, Diogo Pratas, Paulo J. S. G. Ferreira (2013) DNA Sequences at a Glance. PLoS ONE 8(11): e79922.

DOI: dx.doi.org/10.1371/journal.pone.0079922.

Download

DownloadFor convenience, we provide a sequence (here in gzip)(here in zip) and the corresponding information profile in WIG format (here in gzip) (here in zip) that can be uploaded to the UCSC Genome Browser as a custom track.

MFCompress: a compression tool for FASTA and multi-FASTA data

About

AboutMFCompress is a compression tool for FASTA and multi-FASTA files. In comparison to gzip and applied to multi-FASTA files, MFCompress can provide additional average compression gains of almost 50%, i.e., it potentially doubles the available storage, although at the cost of some more computation time. On highly redundant data sets, and in comparison with gzip, 8-fold size reductions have been obtained. MFCompress was designed and implemented at IEETA, a research unit of the University of Aveiro, and is available for non-commercial use. For other uses, please send an email to ap@ua.pt.

Citation

CitationArmando J. Pinho, and Diogo Pratas. “MFCompress: a compression tool for FASTA and multi-FASTA data.” Bioinformatics 30.1 (2014): 117-118.

DOI: dx.doi.org/10.1093/bioinformatics/btt594.

Download

Download

Available at https://bioinformatics.ua.pt/egas.

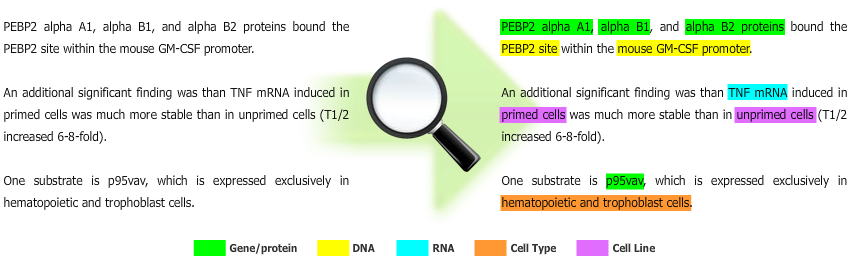

What?

Egas is a web-based platform for biomedical text mining and collaborative curation. The web tool allows users to annotate texts with concept occurrences as well as with relations between concepts. Annotations can be performed manually or based on the results of automated concept identification and relation extraction tools. These automatic annotations may have been previously added to the documents, using one of the accepted input formats, or may be added during the annotation process, by calling a document annotation service. Users can inspect, correct or remove automatic text mining results, manually add new annotations, and export the results to standard formats.

How?

Text-processing and fetching modules, such as the concept and relation annotation services, were implemented in Java, and the web interface was developed using HTML5, CSS3, and JavaScript, in order to allow fast processing of large documents and support mobile devices. The resulting information is stored in a relational database. Finally, all database operations are performed using secured RESTful web-services, allowing easy integration with mobile devices, such as smartphones and tablets.

Funding entity: QREN MaisCentro

Period: Feb.2013 – Dec.2014

The projects’ ambition is the creation of a new set of solutions based in novel ICT technologies, developing a concept that encompasses the synergistic usage of cloud computing, with large database access and information retrieval, associated with advanced methods for reasoning and data mining (and with the basic scalable algorithms to support the dimensions of the data sets targeted).

Funding entity: QREN MaisCentro

Period: Feb.2013 – Jun.2015

Neurodegenerative disorders are a major health concern worldwide, Portugal being no exception. With this project the University of Aveiro proposes extend existing research in the field of neurodegenerative diseases through the creation of a consortium of 5 research units from UA (CBC, QOPNA, I3N, IEETA, CICECO). The projects main goal is to offer novel therapeutic strategies to tackle the complex array of existing neuropathologies. By building a multidisciplinary research team that combines experts in molecular neuropathologies, proteomics, metabolomics, bioinformatics, neuronal networks, organic synthesis and drug design from the UA we will be able to attack the problem on many fronts. Upon successful completion of this project, new therapeutic approaches will have been developed which will contribute to the improvement of life quality for neurodegenerative patients, having a high society impact considering the 10 million new patients reported every year.

The price was awarded at BioLINK SIG 2013 for the work “Neji: a tool for heterogeneous biomedical concept identification”.

BioLINK SIG 2013: Roles for text mining in biomedical knowledge discovery and translational medicine

The Annual Meeting of the ISMB BioLINK Special Interest Group

In Association with ISMB/ECCB 2013, Berlin, Germany

July 20, 2013

A 6-hour iOS Development Seminar will be held by Rui Pedro Lopes, Professor at Polytechnic Institute of Brangança, on the 29th July 2013, at Department of Electronics, Telecommunications and Informatics (DETI), Aveiro.

This Seminar will cover the following main topics: Objective-C, Storyboards, Core Data, Master-Detail User Interface

Universidade de Aveiro, DETI, Room 102, 10h

Exploring Human Genetic Variations

Exploring Human Genetic Variations

About

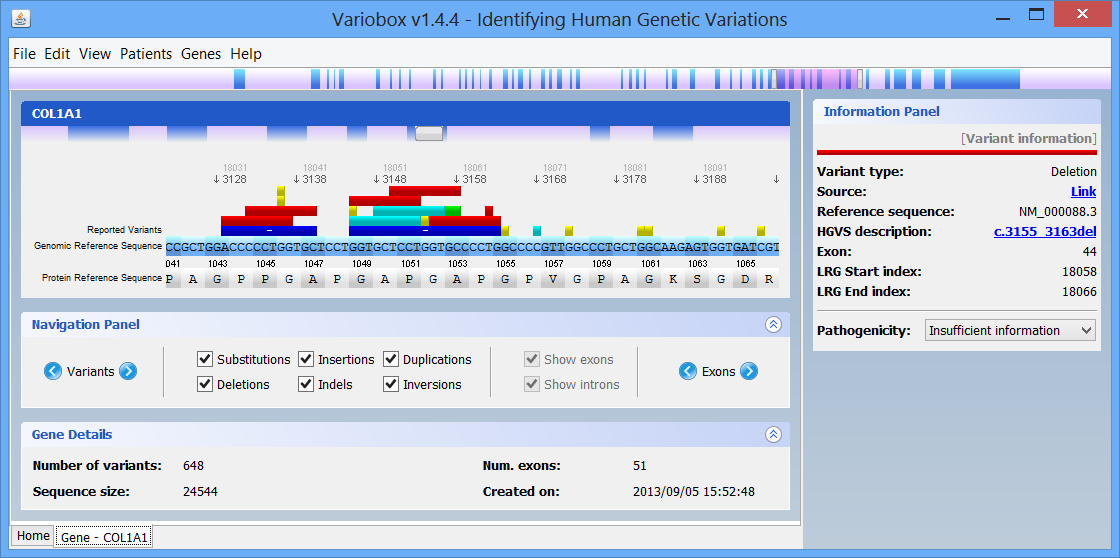

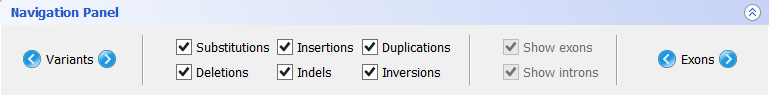

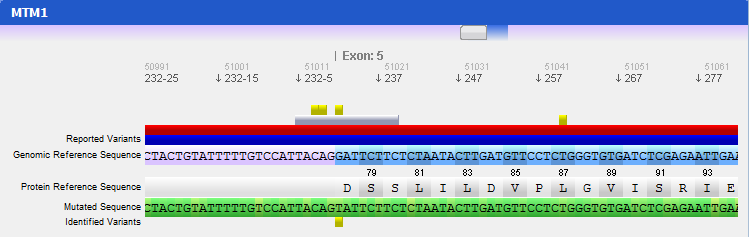

AboutVariobox is a desktop tool for the annotation, analysis and comparison of human genes. Variant annotation data are obtained from WAVe, protein metadata annotations are gathered from PDB and UniProt, and sequence metadata is obtained from Locus Reference Genomic (LRG) and RefSeq databases. By using an advanced sequence visualization interface, Variobox provides an agile navigation through the various genetic regions. Researched genes are compared to the sequences retrieved from LRG and RefSeq, automatically finding and annotating new potential mutations. These features and data, ranging from patient sequences to HGVS-valid variant description up to pathogenicity evaluation, are combined in an intuitive interface to explore genes and mutations.

Citing

CitingTo cite this tool use the following publication:

Variobox: Automatic Detection and Annotation of Human Genetic Variants. Paulo Gaspar, Pedro Lopes, Jorge Oliveira, Rosário Santos, Raymond Dalgleish, José Luís Oliveira. Human Mutation, 2014

Download

DownloadVarioBox is available for all the main operating systems (Windows [XP, 7, 8]; Linux; MacOS) that support Java. The current version of the software is 1.4.4. Click the link bellow to download:

To run, first unpack all the files to any folder. Then, if you’re on Windows, double click the Variobox file inside the folder. On Mac or Linux, start a terminal, change the directory to the created folder, and run java -jar variobox.jar

Tutorial

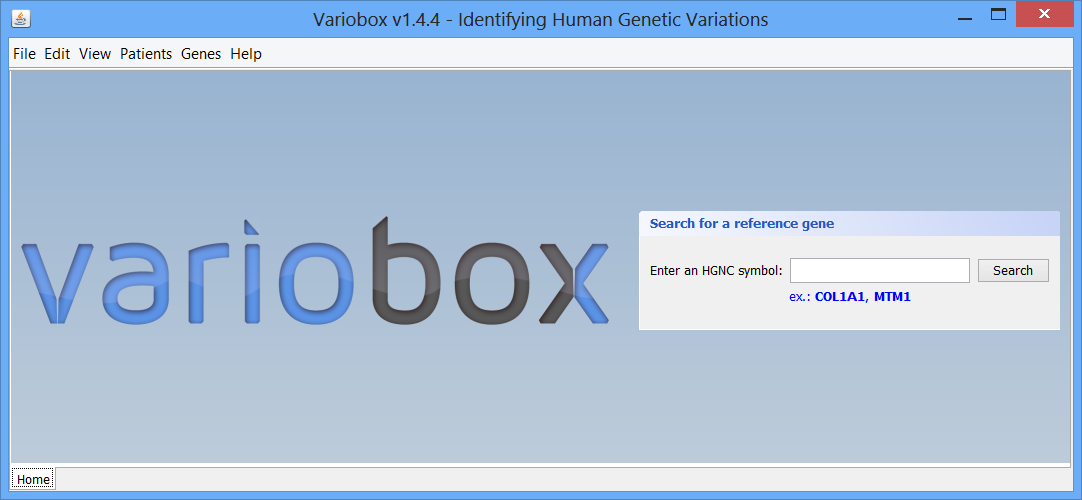

Tutorial Step 1 The initial layout

Step 1 The initial layout This is the initial VarioBox workspace that shows up when you open the application. At the bottom of the workspace you can find a separator, “Home”, created automatically. Here will be as many separators as searches performed, each one identified by the searched HGNC code. At the centre you can see the logo and a panel, where searches for reference genes can be performed, using a valid HGNC symbol. To work with Variobox, a reference gene is always the starting point. After obtaining the reference, a sequence can be loaded to the application to be aligned with the sequence, and analysed.

This is the initial VarioBox workspace that shows up when you open the application. At the bottom of the workspace you can find a separator, “Home”, created automatically. Here will be as many separators as searches performed, each one identified by the searched HGNC code. At the centre you can see the logo and a panel, where searches for reference genes can be performed, using a valid HGNC symbol. To work with Variobox, a reference gene is always the starting point. After obtaining the reference, a sequence can be loaded to the application to be aligned with the sequence, and analysed.

Step 2 Making a quick search

Step 2 Making a quick searchBy default there are two genes bellow the search box: Collagen, type I, alpha 1 (COL1A1), and Myotubularin 1 (MTM1). Click on COL1A1 or type it at the search box and hit search. A progress bar will show up indicating the progress of the loading process. A new tab (with the name of the searched HGNC code), like the one below, will show up once the reference gene is automatically retrieved from the web servers:

The right zone is formed by two distinct panels:

- The top one, titled Protein Viewer is where the 3D protein conformation of the selected gene is shown, if available, using JMol.

- The bottom one, titled Information Panel, which will display additional information on selected items, such as mutations and exons.

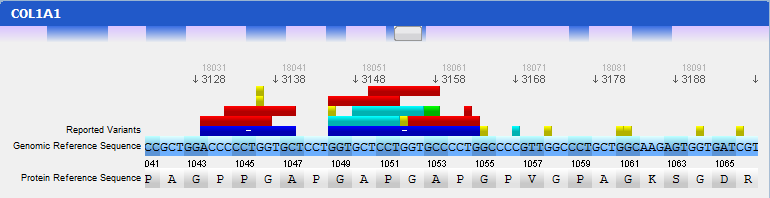

On the top of the window there is a large genomic viewer with a movable and resizable window that allows specifying a region to be explored in the centre zone. This viewer distinguishes exons (blue) and introns (purple), and allows quickly jumping through the gene. The centre zone is populated with gene data and information, in three distinct panels, described below:

- Gene panel

In this panel you can see the codon sequence and the decoded polypeptide sequence, labelled Reference Sequence and Translated Sequence respectively, and also the Known Mutations for the gene, as retrieved from WAVe. A zoomed genomic viewer is also displayed to further facilitate the exploration of the gene.

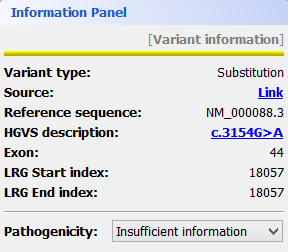

Mutations are identified by different colours, and shown next to the corresponding nucleotides. Additional information about a mutation can be obtain by clicking on the mutation. The Information Panel (right side of the workspace) will display details regarding the selected mutation’s position, source, type, annotation, etc.

- Navigation panel

The Navigation Panel also permits filtering what mutation types are to be shown in the Gene Panel. For instance, if you only check Substitutions, all mutations besides SNPs will be hidden.

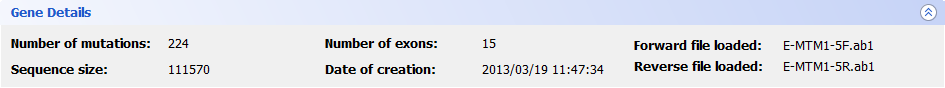

- Gene Details panel

This panel shows you a quick information about the gene that you are analysing. The current information supported is the following:

- Number of mutations: displays the total number of mutations found in the reference gene. No information will be displayed if no mutations are known;

- Number of exons: total number of exons found in the gene;

- Sequence size: total size of the reference sequence;

- Date of creation: the date and time when this gene was created;

- Loaded files: the files that were selected by the user to be aligned with the reference sequence.

Step 3 Loading mutated sequences

Step 3 Loading mutated sequencesTo load a gene sequence and align it with the reference gene, click the menu Genes → Load gene file. Alternatively, go to the menu File → Load gene file. You will be prompted with a new window to select the file you want to load. For the current version we support the file types:

- DNA Sequence Chromatogram File: .scf ; .abi extension

- DNA Electropherogram File: .ab1 extension

- FASTA files: .fasta ; .fa extension

After selecting the file (or files, if you choose the forward-reverse format), click Load selected file and VarioBox will read them. Once the file is correctly loaded, an alignment with the reference gene is automatically performed. This alignment will also display found mutations, as compared to the reference gene. The analysis of the loaded sequence is described in the next step.

Step 4 Analysing mutated sequences and saving results

Step 4 Analysing mutated sequences and saving resultsAfter the files are loaded, the Gene Panel will be updated with the mutated sequence as well as the calculated mutations, as depicted in the following figure:

The loaded sequence will also be coloured according to its chromatogram confidence (if there is one), ranging from green (high confidence) to red (no confidence). This will allow easily understanding the validity of calculated mutations. Also note that the mutations are automatically annotated using the standard notation, and its annotation is displayed when clicking on a mutation. To save the sequences, mutations, alignment and other information, the gene should be assigned to a patient. To do so, go to the menu Genes → Save to patient and select a patient from the list of patients that will be presented.

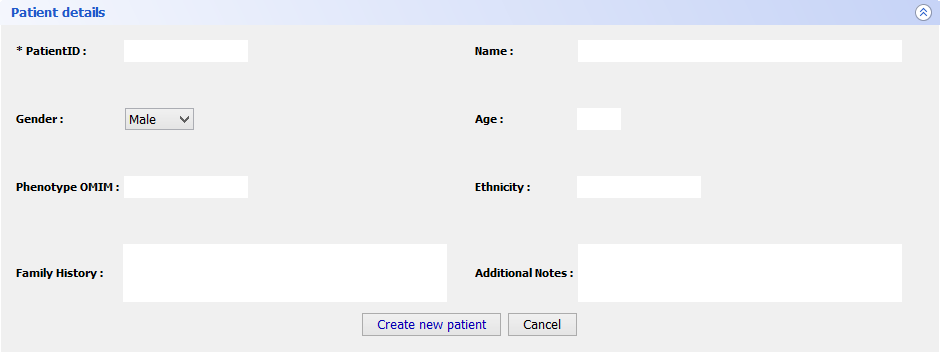

Step 5 Final Features

Step 5 Final FeaturesIf you want to register a new patient in VarioBox, make the following steps: Go to Patients → New patient and fill the Patient Details panel (shown bellow) with all the required information(note that only one field is mandatory). After that just click Save patient and a new record will be created.

To load a saved project, go to Patients → Open patient and select the patient you previously saved. This will create a new tab with all the patient information: patient personal information as well as the genes from that patient. Those genes can be open just by selecting them and clicking Open selected.

This action will open many tabs as many genes you have selected and will re-create all the gene panels you had in the workspace previously.

Closing tabs is as simple as going to Patients → Close patient or Genes → Close current gene project depending of the tab type you have open.

Biomedical Concept Annotation Tool, API and Widget

About

About

becas is a web application, API and widget for biomedical concept identification. It helps researchers, healthcare professionals and developers in the identification of over 1,200,000 biomedical concepts in text and PubMed abstracts.

becas provides annotations for isolated, nested and intersected entities. It identifies concepts from multiple semantic groups, providing preferred names and enriching them with references to public knowledge resources. You can choose the types of entities you want to identify and highlight or mute specific entities in real-time.

To facilitate annotation of PubMed abstracts, becas automatically fetches publications from NCBI servers and renders them with identified concepts highlighted.

Using becas

Using becas

You can access the becas web annotation tool here and learn to use it in its help page. Explore the Web API in the API docs and discover how easy it is to integrate the becas widget in the widget docs.

You can read more about becas in the about page and we would love to hear your feedback!

Diseasecard is a public web portal that integrates real-time information from distributed and heterogeneous medical and genomic databases, presenting it in a familiar visual paradigm.

Bioinformatics is playing a key role on molecular biology advances, not only by enabling new methods of research, but also managing the huge amounts of relevant information and make it available world-wide.

State of the art methods on bioinformatics include the use of public databases to publish the scientific breakthroughs. These databases provide valuable knowledge for the medical practice. But, given their specificity and heterogeneity, we cannot expect the medical practitioners to include their use in routine investigations. To obtain a real benefic from them, the clinician needs integrated views over the vast amount of knowledge sources, enabling a seamless querying and navigation.

Goals

Main goals behind the conception of DiseaseCard:

- Provide the user with an integrated view of the information available in the internet for a specific disease, from the phenotype to the genotype.

- Use rare diseases as the main target due to the high association between phenotype and genotype.

- Do not replicate information that already exists in public or private databases. The system is based in an information model that allows accessing and sharing these data;

- Be supported in a navigation protocol that allows guiding users in the process of retrieving information from the Internet.

Results

Diseasecard can provide the answers to several questions that are relevant in the genetic diseases diagnostic, treatment and accomplishment, such as:

- What are the main features of the disease?

- Are there any drugs for the disease?

- Are there any gene therapies for the disease?

- What laboratories perform genetic tests for the disease?

- What genes cause the disease?

- On which chromosomes are these genes located?

- What mutations have been found in these genes?

- What names are used to refer to these genes?

- What are the proteins coded by these genes?

- What are the functions of the gene product?

- What is the 3D structure for these proteins?

- What are the enzymes associated to these proteins?

Publications

- G. Dias, J. L. Oliveira, F. Vicente, and F. Martín-Sanchez, “Integrating Medical and Genomic Data: a Sucessful Example for Rare Diseases”, in The XX International Congress of the European Federation for Medical Informatics (MIE’2006), Maastricht, Netherlands, 2006.

- G. Dias, J. L. Oliveira, F. Vicente, and F. Martin-Sanchez, “Integration of Genetic and Medical Information Through a Web Crawler System”, in Biological and Medical Data Analysis (ISBMDA’ 2005), Lecture Notes in Computer Science – Volume 3745, Aveiro, Portugal, 2005.

About

AboutNCCD is a method and package tool designed to compute the NCCD (Normalized Conditional Compression Distance) and, for instance, to perform phylogenomics (whole genome) on 48 bird species. It will use a state-of-the-art genomic compressor, based on a mixture of finite-context models, as a metric distance.

Citation

CitationD. Pratas, A. J. Pinho. “A conditional compression distance that unveils insights of the genomic evolution.” arXiv preprint arXiv:1401.4134 (2014).

Download

Download

The EU-ADR Web Platform helps experts in the study of adverse drug reactions (ADRs) through the use of computational services and scientific workflows, provided by several European partners. The system assists in the earlier detection of adverse drug reactions, improving drug safety and contributing to public health benefit. You can access the EU-ADR Web Platform here

EU-ADR Project

The overall objective of this project was the design, development and validation of a computerized system that exploits data from electronic healthcare records and biomedical databases for the early detection of adverse drug reactions. Visit the project page.

Talk from Luís Paquete, Anf. IEETA

The multiobjective formulation of the pairwise sequence alignment problem is introduced, where a vector score function takes into account the substitution score and indels or gaps separately. Two solution methods are introduced: a multiobjective dynamic programming that extends classical algorithms for this problem and an epsilon-constraint algorithm that solves a series of constrained sequence alignment problems. A state pruning technique based on the concept of bound sets is also presented. Finally, its application to phylogenetic tree construction is

discussed.

Universidade de Aveiro, Anf. IEETA, 14h30

Funding entity: IMI-JU

Period: 2013-2018

In recent years, the development and use of Electronic Healthcare Records (EHRs) throughout Europe has grown exponentially resulting in large volumes of clinical data. At the same time, large collections of disease‐specific data are recorded – in local, regional and/or national settings. Researchers also follow specific cohorts over time, and focus on specific types of data such as imaging or genetic data. Other researchers are building biobanks that aim to combine clinical data with genetic data. As a result, individual patients can contribute to multiple, often separate, data sources.

Funding entity: FP7-HEALTH-2012-INNOVATION-1

Period: 2012-2018

Despite examples of excellent practice, rare disease (RD) research is still mainly fragmented by data and disease types. Individual efforts have little interoperability and almost no systematic connection between detailed clinical and genetic information, biomaterial availability or research/trial datasets. By developing robust mechanisms and standards for linking and exploiting these data, RD-Connect will develop a critical mass for harmonisation and provide a strong impetus for a global “trial-ready” infrastructure ready to support the IRDiRC goals for diagnostics and therapies for RD patients.

Flexible, easy and powerfull framework for faster biomedical concept recognition.

Download Learn more

What?

Neji is an innovative framework for biomedical concept recognition. It is open source and built around four key characteristics: modularity, scalability, speed, and usability. It integrates modules of various state-of-the-art methods for biomedical natural language processing (e.g., sentence splitting, tokenization, lemmatization, part-of-speech tagging, chunking and dependency parsing) and concept recognition (e.g., dictionaries and machine learning). The most popular input and output formats, such as Pubmed XML, IeXML, CoNLL and A1, are also supported. Additionally, the recognized concepts are stored in an innovative concept tree, supporting nested and intersected concepts with multiples identifiers. Such structure provides enriched concept information and gives users the power to decide the best behavior for their specific goals, using the included methods for handling and processing the tree.

Why?

Concept recognition is an essential task in biomedical information extraction, presenting several complex and unsolved challenges. The development of such solutions is typically performed in an ad-hoc manner or using general information extraction frameworks, which are not optimized for the biomedical domain and normally require the integration of complex external libraries and/or the development of custom tools. Thus, Neji fills the gap between general frameworks (e.g., UIMA and GATE) and more specialized tools (e.g., NER and normalization), streamlining and facilitating complex biomedical concept recognition.

How?

On top of the built-in functionalities, developers and researchers can implement new processing modules or pipelines, or use the provided command-line interface tool to build their own solutions, applying the most appropriate techniques to identify names of various biomedical entities. Neji was built thinking on different development configurations and environments: a) as the core framework to support all developed tasks; b) as an API to integrate in your favorite development framework; and c) as a concept recognizer, storing the results in an external resource, and then using your favorite framework for subsequent tasks.

Universidade de Aveiro, Anf. Ambiente, 14h

Pedro Lopes, “Service Composition in Biomedical Applications”

Universidade de Aveiro, DETI/IEETA

Dr. Kim Sneppen from the Niels Bohr Institute, Copenhagen-DK, will give the give the inaugural Lecture of our Systems Biology seminars series entitled Simplified Models of Biological Networks, on the 28th of September.

Universidade de Aveiro, Anf. Ambiente, 14h

Redesign mRNA sequences to optimise the secondary structure

Redesign mRNA sequences to optimise the secondary structure

About

AboutThe mRNA optimiser is a tool that redesigns a gene messenger RNA to optimise its secondary structure, without affecting the polypeptide sequence. The tool can either maximize or minimize the molecule minimum free energy (MFE), thus resulting in decreased or increased secondary structure strength.

The optimisation is achieved by using an heuristic to look for synonymous gene sequences, and select the ones with the best secondary structure. Evaluations of the secondary structure are made using a correlated stem-loop prediction algorithm that examines the nucleotide sequence for simple stem-loops. This algorithm is fine-tuned to have its results highly correlated with the MFE evaluations of RNAfold.

Our results indicate that an average of over 40% increase in MFE can be obtained with this method. Also, since there is a tendency to reduce the GC percentage of nucleotide sequences when optimising, the developed tool includes an option to maintain the GC content of the wildtype gene.

Citing

CitingP. Gaspar, G. Moura, M. A. S. Santos, and J. L. Oliveira mRNA secondary structure optimization using a correlated stem–loop prediction Nucleic Acids Research, Jan 2013, doi: 10.1093/nar/gks1473

Download

DownloadSelect your operating system:

Current version is 1.0.

Usage

UsageThe mRNA optimiser is a command line tool (a graphical interface will be available soon). To use it you need to open a terminal window, change to the directory where mRNAOptimiser is, and run it:

1. Open a terminal window

- In Windows, go to the Start menu, click Run, write cmd, and click Ok.

- In Mac, write terminal in spotlight and hit enter.

2. Change the directory

- In Windows, Mac and Linux, write cd in the terminal followed by the directory where you placed the tool.

3. Run the mRNA optimiser

- In Windows, write mRNAOptimizer.exe and hit enter. Usage indications will show up in the terminal.

- In Mac and Linux, write java -jar mRNAOptimizer.jar and hit enter. Usage indications will show up in the terminal.

You may choose to supply your mRNA sequence by writing it into the terminal or referring an input file, with the -f input_sequence option. The tool only changes the coding region of the mRNA, therefore you must indicate where the start codon begins (-b index, to indicate the index of the first nucleotide of the start codon) and where the stop codon ends (-e index, to indicate the index of the last nucleotide of the stop codon). The default coding zone is the entire sequence.

To redirect the output results to a file, use the -o output_file option. To choose whether the tool should maximize or minimize the MFE, use the -d type option (default is maximize). You may limit the algorithm in both time and number of iterations by using the options -t max_time and -i max_iterations. Also, the tool will use the standard genetic code by default, but you can select other genetic coding tables using the -c coding_table option.

To maintain the original mRNA percentage of guanine and citosine (GC content) unaltered after optimisation, use the -g option. There is also a quiet mode, where nothing is output except for the resulting sequence, using the -q option.

Any questions and suggestions are welcome 🙂

What is OralCard?

OralCard is an online bioinformatic tool that comprises results from manually curated articles reflecting the oral molecular ecosystem (OralPhysiOme), by merging the experimental information available from the oral proteome both of human (OralOme) and microbial origin (MicroOralOme). OralCard is a key resource for understanding the molecular foundations implicated in biology and disease mechanisms of the oral cavity.

How does it work?

OralCard integrates information about more than 3500 proteins and searching can be performed in three distinct views: (1) by protein names or respective UniProt codes, (2) by disease name, OMIM code or MeSH term, (3) and by organism.

Nuno Rosa, “From the Salivar Proteome to Oralome”

Universidade Católica Portuguesa, Viseu

International School on Semantic Web Applications and Technologies for the Life Sciences 2012

May 2nd – 5th, 2012

Located at the University of Aveiro,

Aveiro, Portugal

More information online at http://www.swat4ls.org/schools/aveiro2012/

Helena Deus, “Linked Data and Semantic Web Technologies for improving discovery in the Life Sciences”

We live in a world of data. This is also true for the Life Sciences, where the introduction of omics technologies such as genome sequencing has led to the industrialization of data production beyond a craft-based cottage industry and into a deluge of biological information. Nevertheless, the apparently simple task of collecting and keeping pace with the latest information about a gene of interest is still thwarted by the need for biological researchers to become experts at database-surfing and literature mining.

Linked Data is a set of principles devised for creating a Web of Data where a new generation of Web applications can discover and link relevant pieces of information based on its properties rather than its location in a database. Linked data is also at the root of a movement towards building a knowledge continuum in the Life Sciences and by doing so, has the potential to be a foundation for a platform that will support 21st century Biology.

In this talk, I will present some of the scenarios where Linked Data has been successfully applied in accelerating scientific discovery and translation of Life Sciences knowledge into Health Care and what challenges are still to be addressed.

Helena Deus Bio at http://lenadeus.info

GReEn: a tool for efficient compression of genome resequencing data.

GReEn: a tool for efficient compression of genome resequencing data.

About

AboutWe describe GReEn (Genome Resequencing Encoding), a tool for compressing genome resequencing data using a reference genome sequence. It overcomes some drawbacks of the recently proposed tool GRS, namely, the possibility of compressing sequences that cannot be handled by GRS, faster running times and compression gains of over 100-fold for some sequences.

GReEn is available for non-commercial use. For other uses, please send an email to ap@ua.pt.

Download

Download Citation

CitationDOI: 10.1093/nar/gkr1124

III WORKSHOP DE RED IBEROAMERICANA DE TECNOLOGÍAS CONVERGENTES NBIC EN SALUD (IBERO-NBIC) – CYTED Program

Hotel Moliceiro, Aveiro, Portugal

October 10-11, 2011

Day 1: Monday, 10

9h00 – 9h30: Opening and Welcome

Boas Vindas

José Luís Oliveira, DETI/IEETA, Universidade de Aveiro, Portugal

Acto de apertura del III Workshop Internacional Redes Ibero-NBIC y NanoRoadmap

Alejandro Pazos & Julián Dorado, Universidade da Coruña, España

9h30 – 11h15:

Vacunología inversa aplicada en malaria

Raúl Isea, IDEA, Fundación de Estudios Avanzados, Venezuela

Bioinformatics, research and applications

Sergio Guíñez Molinos, UCBSM, Universidad de Talca, Chile

Tecnologías NBIC y Nanotoxicidad: Gestión del conocimiento asociado al uso de nanopartículas en medicina

Diana de la Iglesia, GIB, Universidad Politécnica de Madrid, España

Tecnologías de la Información y el Conocimiento en Salud. Un Sistema Basado en Ontologías para el Apoyo a la Toma de Decisión en UCIs

Ana Freire, Universidade de Coruña, España

11h15 – 11h30: Coffee Break

11h30 – 13h00:

Integration of heterogeneous biomedical names taggers

David Campos, DETI/IEETA, Universidade de Aveiro, Portugal

Collecting and Enriching Human Variome Datasets

Pedro Lopes, DETI/IEETA, Universidade de Aveiro, Portugal

Doctoral Program in Nanosciences and Nanotechnology of the University of Aveiro

Tito Trindade, DQ, Universidade de Aveiro, Portugal

13h00 – 14h30: Lunch

14h30 – 16h00:

Integración de la información molecular en un Sistema de Informacion en Salud

Segunda etapa: estándares y control de calidad.

Carlos Otero, HIBA, Buenos Aires, Argentina

Connecting different levels of biological information. From atoms to people

Guillermo López, ISCIII – Instituto de Salud Carlos II, Madrid, España

Posibles aportes de una empresa de Educación Médica Continua a una red de investigación en Salud

Antonio López, EVIMED, Uruguay

16h00 – 16h30: Coffee Break

16h30 – 18h00:

Internal Meeting / Reunión Interna de la Red

Day 2: Tuesday, 11

Visit to Instituto Ibérico de Nanotecnologia (Braga)

Open Source

Open Source- Use, change and distribute

- Social development

High Performance*

High Performance*- BioCreative: 87,54%

- JNLPBA: 73,05%

High-end Techniques

High-end Techniques- Linguistic dependency parsing

- Model combination

Flexible and Scalable

Flexible and Scalable- Extensible architecture

- Fast annotation

Easy to Use

Easy to Use- Automated scripts

- Java library

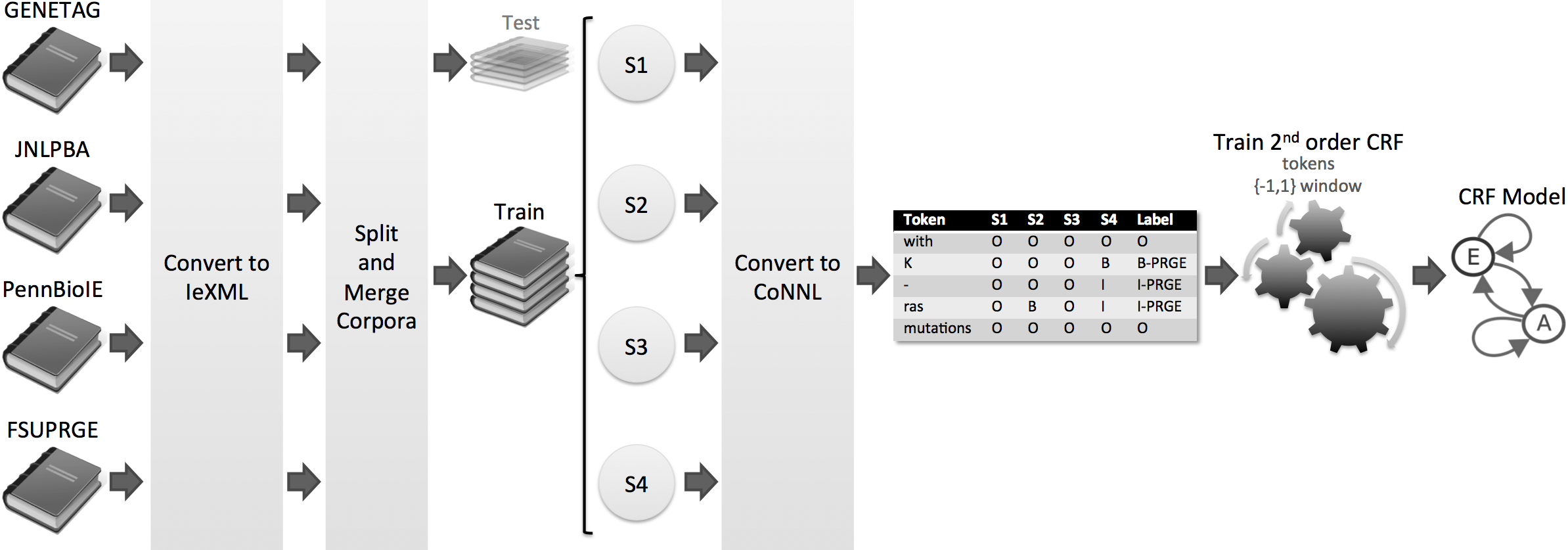

About

- Machine Learning: Conditional Random Fields (CRFs);

- Features: orthographic, morphological, linguistic parsing and conjunctions;

- Combination: combination of models with different orders and parsing directions;

- Post-processing: parentheses correction and abbreviation resolution.

- David Campos, Sérgio Matos, José Luís Oliveira. Gimli: open source and high-performance biomedical name recognition. BMC Bioinformatics, vol. 14, no. 1, p. 54, February 2013

Download

Documentation

Join us

david.campos(a)ua.pt

david.campos(a)ua.ptTeam

- David Campos, david.campos(at)ua.pt

- Sérgio Matos, aleixomatos(at)ua.pt

- José Luís Oliveira, jlo(at)ua.pt

Problem

The recognition of named entities is a crucial initial task of biomedical text mining. A number of NER solutions have been proposed in recent years, taking advantage of different resources and/or techniques. Currently, the best results are achieved by combining the output of different systems. However, little effort has been spent in such harmonisation solutions, being specific to a corpus and/or non-knowledge based.

Features

- Knowledge-based harmonisation

- Correct, remove and create annotations

- Support several biomedical domains and organisms

- On-demand harmonisation

- Support both NER and normalisation systems

- Automated scripts for simple usage

- Java library for advanced users

- Input and Output in IeXML format

Method

Totum is a innovative harmonisation solution based on Conditional Random Fields, which were trained on several manually curated corpora. Thus, we avoid the single corpus dependency, supporting several biomedical domains and organisms. In the end, Totum harmonises gene/protein annotations provided by several heterogeneous NER solutions, following the gold standard requirements.

Results

Considering a corpus that contains the test parts of the four corpora, the experiments show that Totum improves the F-measure of state-of-the-art tagging solutions by up to 10% in exact alignment and 22% in nested alignment. Finally, Totum achieves an F-measure of 70% (exact matching) and 82% (nested matching) against the same corpus.

Used tools

- MALLET: framework for statistical natural language processing, providing a Conditional Random Fields implementation;

- Apache OpenNLP: tokenisation and respective model;

- IeXML: annotation guidelines and associated library;

- monq.jfa: fast and flexible text filtering with regular expressions.

Publication(s)

- David Campos, Sérgio Matos, Ian Lewin, José Luís Oliveira, Dietrich Rebholz-Schuhmann. Harmonisation of gene/protein annotations: towards a gold standard MEDLINE. Bioinformatics, vol. 28, no. 9, p. 1253-1261, March 2012. doi:10.1093/bioinformatics/bts125

Team

- David Campos, david.campos(at)ua.pt

- Sérgio Matos, aleixomatos(at)ua.pt

- Ian Lewin, lewin(at)ebi.ac.uk

- José Luís Oliveira, jlo(at)ua.pt

- Dietrich Rebholz-Schuhmann, rebholz(at)ebi.ac.uk

COEUS main web server is down for maintenance. It will be online again on February 27th, 2013. Thank you for your patience.

Ipsa scientia potestas est. Knowledge itself is power.

Streamlined back-end framework for rapid semantic web application development.

Get it Here

GitHub project

Integration

Create custom warehouses, integrating distributed and heterogeneous data.

Integrate CSV, SQL, XML or SPARQL resources with advanced Extract-Transform-Load warehousing features.

Cloud-based

Deploy your knowledgebase in the cloud, using any available host.

Your content – available any time, any where. And with full create, read, update, and delete support.

Semantics

Use Semantic Web & LinkedData technologies in all application layers.

Enable reasoning and inference over connected knowledge.

Access data through with LinkedData interfaces and deliver a custom SPARQL endpoint.

Rapid Dev Time

Reduce development time. Get new applications up and running much faster using the latest rapid application development strategies.

COEUS is the back-end framework, the client-side is language-agnostic: PHP, Ruby, JavaScript, C#… COEUS’ API works everywhere.

Interoperability

Use COEUS advanced API to connect multiple nodes together and with any other software.

Create your own knowledge network using SPARQL Federation enabling data-sharing amongst a scalable number of peers

Ecosystem

Launch your custom application ecosystem. Distribute your data to any platform or device.

Reach more users and create new semantic cloud-based software platforms.

The Human Variome relates to genomic mutations and their effects on particular phenotypes. This critical life sciences research field has grown greatly in recent years, mostly due to the appearance of projects such as the Human Variome Project or the European GEN2PHEN Project. Nonetheless, locus-specific mutation databases and included variants are far from being standardized and widely used in the research community workflow. With WAVe, we offer centralized and transparent access to these databases, combined with the integration of found variants in a single system that is enriched with the most relevant gene-related information in a user-friendly web-based workspace.

https://bioinformatics.ua.pt/WAVe

Features

WAVe provides a comprehensive set of features that will improve bioligists’ workflow when researching in the genomic variation field.

Search

Searching for genes only requires that users start typing the gene HGNC-approved symbol in any of the available search boxes. This event will trigger the automatic suggestion system that will offer various solutions based on users’ input. Following one of the suggestions leads directly to the gene view interface. When a suggestion is not accepted and there is more than one match, WAVe will display the gene browse interface, containing only the results matching the provided query.

Browse

Querying for * lists all genes as well as available LSDBs and variants for each gene. In this gene browse scenario, searches for a particular gene can be performed, in real time, by typing in the table search box. By clicking in one of the genes, users are sent to the gene view interface.

View

The gene view interface is the main WAVe workspace. The layout is divided in two main areas: the sidebar and the content zone. The sidebar displays minimal gene information on top – gene HGNC symbol, name and locus – and the navigation tree, which is WAVe’s user interface key element, at the bottom. The navigation tree is organized in nodes, each referring to a distinct data type: each node leaf links directly to a page containing information regarding a specific topic. Pages linked in each leaf appear in the content zone. This enables loading external applications without leaving WAVe’s interface and, thus, without losing focus with ongoing research.

API

Programmatic access to data is also available. The gene tree is available as an easily-parsable feed. Feeds are obtained by appending the atom tag (or other format: rss, json) to the end of the gene view address. For instance, BRCA2 Atom feed is available at https://bioinformatics.ua.pt/WAVe/gene/BRCA2/atom .

WAVe also provides an RSS API for variant access. With this, you have programmable access to all available variants for a given gene. For instance, BRCA2 variants (from multiple LSDBs) are at https://bioinformatics.ua.pt/wave/variant/BRCA2/atom. In addition to the variant description, WAve points to the original LSDB containing the variant.

This WAVe makes WAVe the only platform capable of providing aggregated variant listings through both visual and programmable access.

Feedback

We highly appreciate any feedback you can provide regarding WAVe and the genomic variation field. To do this, you can simply send an e-mail to pedrolopes@ua.pt. Thank you.

Daniel Sobral, “Ensembl Regulation”

Ensembl is a world reference for vertebrate genome annotation, providing high quality annotation for more than 50 species. Particularly challenging is the annotation of non-coding functional regions of the genome. Ensembl Regulation aims at making Ensembl

a reference for the annotation of genomic features with a potential role in the transcriptional regulation of gene expression. Combining publicly available data from large projects like ENCODE and The Epigenomics Roadmap, we group overlapping areas of open chromatin and transcription factor binding to build a “best-guess” set of regulatory features, in a cell-aware manner. Finally, we also include histone-modification and polymerase data to generate cell-specific classifications for the regulatory regions. Taking advantage of the role of the EBI as part of the ENCODE data analysis group, we aim at bringing Ensembl to the forefront of the annotation of the regulatory genome.

Daniel Polónia, “An electronic market for teleradiology services”

José Paulo Lousado, “Pattern analysis on DNA primary structure”

Funding entity: FP7-ICT (STREP)

Period: 2008-2012

The overall objective of this project is the design, development and validation of a computerized system that exploits data from electronic healthcare records and biomedical databases for the early detection of adverse drug reactions.

Genomic Name Server

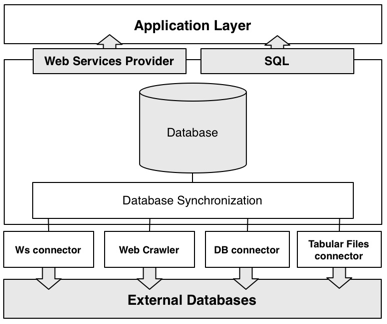

The integration of heterogeneous data sources has been a fundamental problem in database research over the last two decades. The goal is to achieve better methods to combine data residing at different sources, under different schemas and with different formats in order to provide the user with a unified view of the data. Although simple in principle, due to several constrains, this is a very challenging task where both the academic and the commercial communities have been working and proposing several solutions that span a wide range of fields. However, the limitations found on most solutions reflect the difficulty to obtain a simple but comprehensive schema able to accommodate the heterogeneity of the biological domain while maintaining an acceptable level of performance: GeNS is our proposal towards solving this issue.

Installing and using GeNS

The Genomic Name Server can be either downloaded and installed on a local computer or accessed by Web Services. Please keep in mind that GeNS currently requires over 10 GB of disk space and this figure is likely to increase in the near future. Therefore, if disk space is a serious restriction you should consider using the available Web Services. We are currently using

Microsoft SQL Server 2008 but GeNS can be set up in any other DBMS.

a) Setting up a local instance of GeNS

- Download either the full backup of the database (here) or a dump of all the tables (available here): Last update: 24/11/09

- Once inside your DMBS, simply restore the full backup of the database (this is for MS SQL Server 2008 only; a step-by-step walkthrough can be found here) or import the data from the tables to the database.

- Congratulations! GeNS is now ready to be used.

b) Using the Web Services

The Web Services are now available here. Furthermore, a detailed description is also available here (Updated March 24).The Web Services API is in an early stage of development and, as such, users should bear in mind that certains problems may arise during it’s usage.

Advantages

- Easy to understand and use

- Flexible and scalable

- Efficient

- Accessible by several methods

- Improves the cross-database low identifier coverage issue

Architecture

GeNS uses four distinct methods for gathering data from external databases: by Web Services, web crawlers, database connectors and finally by tabular files connectors. All of the recovered data is subsquently processed and synchronized to our database. Finally, the data can be accessed via Web Services or by downloading, installing and querying the data with SQL.

Currently, GeNS is importing data from four major databases: UniProt (SwissProt and TrEMBL), KEGG, EMBL – EBI and Entrez. Since these databases already incorporate data from third-party databases, we have over 460.000 unique genes, more than 100.000 biological relations and a hundred and forty distinct datatypes.

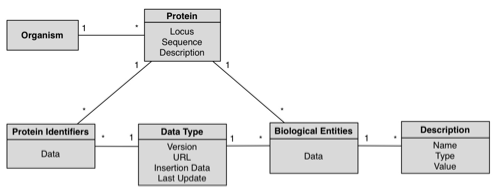

Database

GeNS database was designed with simplicity and extensibility in mind; the following schema is a complete representation of the database.

Concepts:

- Organism: An individual form of life capable of growing, metabolizing nutrients, and usually reproducing. Organisms can be unicellular or multicellular. The Organism table stores taxonomic information; each entry corresponds to an organism with any given number of associated proteins. This table is the root of the hierarchical model. For each organism, we store its scientific and short names.

- Protein: Any of a group of complex organic macromolecules that contain carbon, hydrogen, oxygen, nitrogen, and usually sulfur and are composed of one or more chains of amino acids. The Protein table is where the proteins’ internal identifiers and gene locus are stored; each entry in this table has a referring organism (in which this protein is found) and may have any number of associated biological entities and/or equivalent external databases’ protein identifiers in the ProteinIdentifier and BioEntity tables.

- ProteinIdentifier: The table in which the mapping between the external databases’ protein identifier and BioPortal’s

internal identifier is made. - BioEntity: A table that stores all the biological entities associated with a given protein; this includes,

among other things, pathways and gene ontologies. - DataType: A table listing all the possible external databases from which the biological data may come from; each entry in the ProteinIdentifier and BioEntity tables references this

table, so that we may easily determine the nature (and source) of the

data.

Reproducing the results

The following files allow anyone to reproduce the obtained results regarding the cross-database low identifier coverage issue and the

performance testing queries. You will need a working copy of GeNS in order to use these scripts.

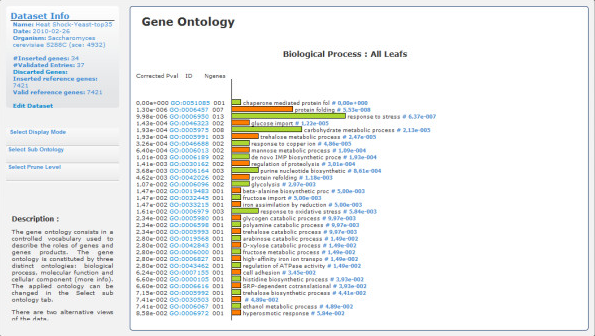

GeneBrowser is a web-based tool that, for a given list of genes, combines data from several public databases with visualisation and analysis methods to help identify the most relevant and common biological characteristics. The functionalities provided include the following: a central point with the most relevant biological information for each inserted gene; a list of the most related papers in PubMed and gene expression studies in ArrayExpress; and an extended approach to functional analysis applied to Gene Ontology, homologies, gene chromosomal localisation and pathways.

Although GeneBrowser can be used to answer many different biological questions, a particular question set was used to tune its development:

- What public databases provide relevant information about my dataset and how can I navigate through them?

- What biological processes are enriched with respect to my input list of genes?

- What are the most relevant metabolic pathways that contain my genes?

- What are the genomic regions of these genes?

- Which are the most relevant homologue classes in my list of genes?

- What gene expression experiments have been previously conducted with the same genes?

- What are the most relevant publications associated with my study?

Feedback

We highly appreciate any feedback you can provide regarding GeneBrowser. jpa@ua.pt. Thank you.

Reference

J. Arrais, J. Fernandes, J. Pereira and J. L. Oliveira, Exploring and identifying common biological traits in a set of genes, BMC Bioinformatics, BMC Bioinformatics 2010, 11:212 (link)

Dicoogle is an open-source Picture Archiving and Communications System (PACS) archive. Its modular architecture allows the quick development of new functionalities, due to the availability of a Software Development Kit (SDK).

Dicoogle starts by indexing DICOM files and metadata, both locally and in distributed systems using a P2P communication framework. Upon this distributed index users can then search for exams or specific features using a free text interface.

What is QuExT?